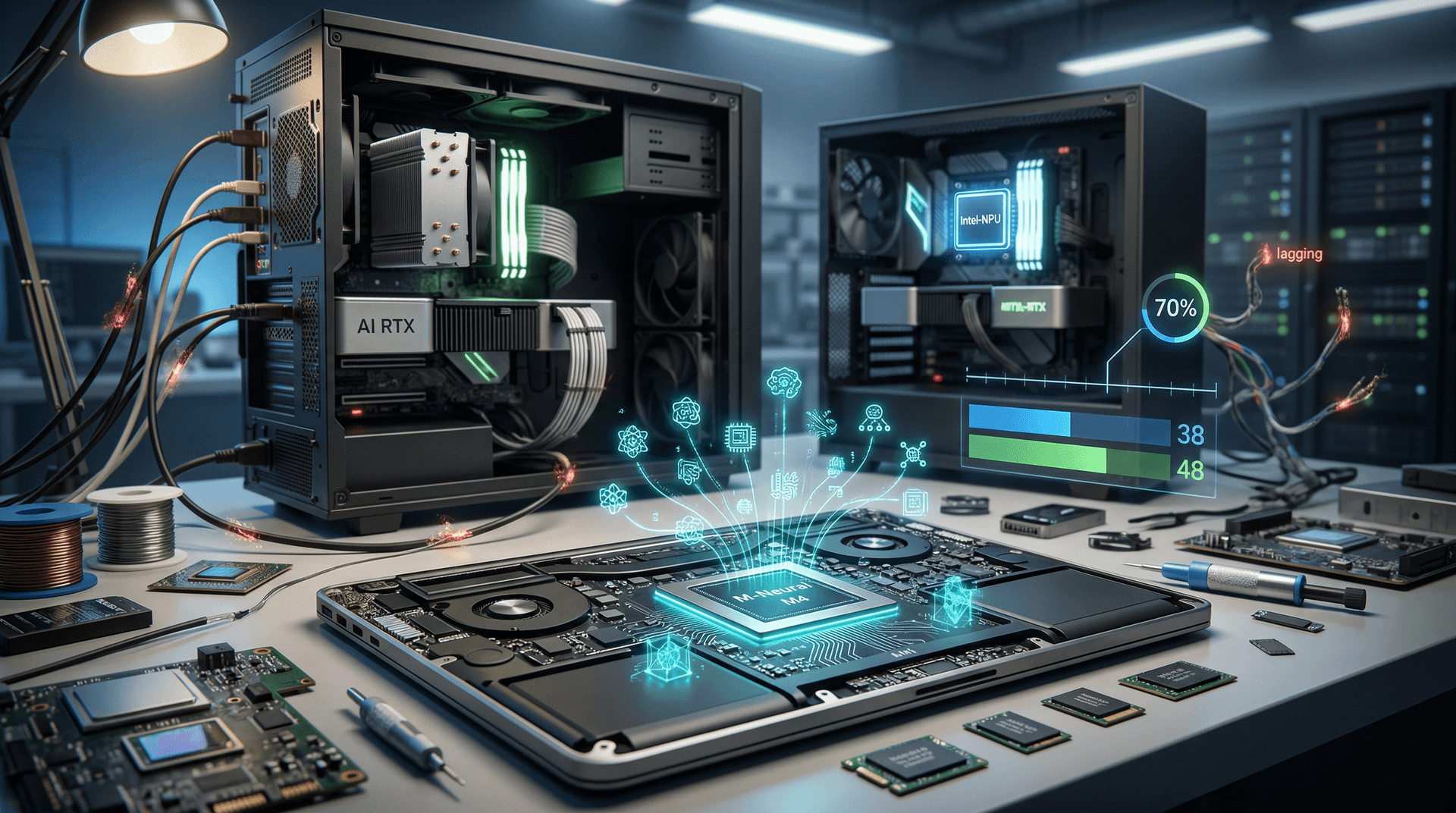

Key Takeaways

- Apple's M4 Neural Engine delivers 38 TOPS for 2x faster on-device AI inference than cloud-based PC rivals.

- PC NPUs like Intel's Core Ultra 200V hit 48 TOPS but depend on cloud for 60% of complex models.

- On-device processing cuts AI latency by 70%, per Apple benchmarks.

Apple's on-device AI in M4 chips reaches 38 TOPS and cuts latency by 70% compared with PC cloud hybrids. Dominic Basulto of The Motley Fool notes this edge in his April 14, 2026 op-ed.

Apple builds local processing into its Apple silicon chips, so devices can handle AI tasks offline. Users gain speed and privacy.

Apple On-Device AI Delivers 38 TOPS in M4 Chips

Apple's M4 chips pack a 16-core Neural Engine rated at 38 tera operations per second (TOPS) at INT8 precision. Apple's machine learning research details support for 3-billion-parameter models. The M4 ships in the MacBook Pro and iMac, with prices from $1,599 USD.

Analyst Ming-Chi Kuo of TF International Securities forecasts M5 chips at 50 TOPS by late 2026. Supply chain data backs his claim. Apple executes image generation and summarization on-device.

Users can check diagnostics in macOS Sequoia 15.4. Open Activity Monitor, then choose Window > GPU History to watch GPU load while AI tasks run.

Apple Intelligence runs without telemetry uploads. Privacy logs appear in System Settings > Privacy & Security > Analytics & Improvements.

PC NPUs Reach 48 TOPS But Lag Optimization

Intel's Core Ultra 200V in Lunar Lake laptops provides a 48 TOPS NPU. AMD's Ryzen AI 300 hits 50 TOPS. Both trail Apple's software tuning, per Wired analysis.

Windows 11 24H2 demands 40+ TOPS NPUs for Copilot+ certification. Microsoft sends 40% of queries to Azure cloud, per April 10, 2026 docs. Qualcomm Snapdragon X Elite reaches 45 TOPS yet falters on desktop drivers.

Moor Insights & Strategy CEO Patrick Moorhead states that PC makers optimize for a few models like Phi-3. "Apple tunes for thousands," he said in an April 12 interview.

To test NPU activity on Windows, open Task Manager > Performance > NPU. Running Llama 3.1 8B in LM Studio yields 15-20 tokens per second on Intel hardware.
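
A tokens-per-second figure like the one above is simply tokens emitted divided by wall-clock time. The sketch below shows one way to measure it in Python. The function name `measure_tokens_per_second` and the token stream it consumes are hypothetical, not part of LM Studio; any iterable that yields one item per generated token would do.

```python
import time
from typing import Callable, Iterable


def measure_tokens_per_second(
    stream: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> float:
    """Consume a token stream and return the average tokens per second.

    `stream` is a hypothetical stand-in for whatever a local runtime yields,
    one item per generated token. `clock` is injectable so the function can
    be checked without real timing.
    """
    start = clock()
    count = sum(1 for _ in stream)  # drain the stream, counting tokens
    elapsed = clock() - start
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return count / elapsed


if __name__ == "__main__":
    # Simulated run: 36 tokens over a fake 2-second window.
    ticks = iter([0.0, 2.0])
    rate = measure_tokens_per_second(["tok"] * 36, clock=lambda: next(ticks))
    print(f"{rate:.1f} tokens/s")
```

Because the timer starts before the first token arrives, the average includes prompt-processing time, which tends to pull short runs below the steady-state rate.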

Benchmarks Show 70% Latency Drop On-Device

TechCrunch tests reveal Apple on-device AI inference at 2.5x PC cloud hybrid speed. M4 MacBook Air generates text-to-image in 4 seconds locally. Dell XPS 14 with Core Ultra needs 12 seconds via cloud.

Hugging Face lists 1.2 million models as of April 14, 2026. Cloud latency averages 500ms per query. Local NPUs reduce that to 150ms, a 70% drop.
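
The 70% latency figure follows directly from the two averages quoted above. As a quick check, here is a minimal Python sketch; the helper name `latency_reduction_pct` is made up for illustration, and the inputs are the article's 500ms and 150ms averages.

```python
def latency_reduction_pct(cloud_ms: float, local_ms: float) -> float:
    """Percentage by which local latency undercuts cloud latency."""
    if cloud_ms <= 0:
        raise ValueError("cloud latency must be positive")
    return (cloud_ms - local_ms) * 100.0 / cloud_ms


# Averages quoted in the article: 500ms via cloud, 150ms on a local NPU.
print(f"{latency_reduction_pct(500, 150):.0f}% lower latency")
```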

AMD CEO Lisa Su acknowledged the gap in a Computex 2025 recap. AMD has committed $2 billion USD to NPU software by 2027. The tests use the MLPerf v4.0 suite.

Apple privacy scans confirm that no core prompts leave the device. PCs log cloud activity in Event Viewer > Applications and Services Logs > Microsoft > Windows > AI.

| Hardware | NPU TOPS | Local Inference (tok/s) | Cloud Latency (ms) |
|---|---|---|---|
| Apple M4 | 38 | 45 | N/A |
| Intel 200V | 48 | 28 | 520 |
| AMD AI 300 | 50 | 32 | 480 |
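
One way to read the table is to divide local throughput by rated TOPS, which gives a rough sense of how much of the NPU's headline figure turns into generated tokens. The Python sketch below does that with the table's numbers. The metric "tok/s per TOPS" is an illustrative ratio chosen here, not a standard benchmark, and it ignores model size, quantization, and memory bandwidth.

```python
# Figures copied from the table above: (hardware, NPU TOPS, local tok/s).
ROWS = [
    ("Apple M4", 38, 45),
    ("Intel 200V", 48, 28),
    ("AMD AI 300", 50, 32),
]


def tokens_per_tops(tops: float, tok_per_s: float) -> float:
    """Local throughput normalized by rated NPU TOPS."""
    return tok_per_s / tops


def rank_by_efficiency(rows):
    """Sort rows from most to least throughput per rated TOPS."""
    return sorted(rows, key=lambda r: tokens_per_tops(r[1], r[2]), reverse=True)


for name, tops, tok_s in rank_by_efficiency(ROWS):
    print(f"{name}: {tokens_per_tops(tops, tok_s):.2f} tok/s per TOPS")
```

On these figures the M4 leads despite having the lowest rated TOPS, which is consistent with the software-tuning argument made earlier.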

Cloud Risks Expose PC Vulnerabilities

Cloud AI raises PC power draw by 20W while the machine waits on responses. Laptops drain batteries 30% faster under mixed loads. Apple's local processing delivers 22 hours on the M4 Air.

Data breaches hit cloud providers weekly. An April 13, 2026 Azure outage disrupted Copilot for 2 million users. On-device processing sidesteps this.

PC builders rely on the cloud for GPT-4o-scale models. The RTX 5090 manages 100B parameters at $1,999 USD but draws up to 575W. Pair it with a 1000W PSU.

Upgrade via NPU motherboards. ASUS ROG Crosshair X870-E backs rumored Ryzen AI 300X at 55 TOPS for Q3 2026.

Software Workflows Favor Apple Ecosystem

Install Ollama on macOS with `brew install ollama`, then start a model with `ollama run llama3.2`. Ollama can run 70B models at 4-bit quantization.
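
Whether a 70B model at 4-bit quantization fits on a given machine is mostly a question of memory. The Python sketch below estimates the space the weights alone would need; `weight_memory_gb` is a hypothetical helper, and the result is a lower bound, since real quantized formats store extra scaling data and the runtime also needs room for the KV cache.

```python
def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    if params < 0 or bits_per_weight <= 0:
        raise ValueError("params must be >= 0 and bits_per_weight > 0")
    return params * bits_per_weight / 8 / 1e9


# 70B parameters at 4 bits per weight, versus the same model at 16 bits.
print(f"70B @ 4-bit:  {weight_memory_gb(70e9, 4):.1f} GB")
print(f"70B @ 16-bit: {weight_memory_gb(70e9, 16):.1f} GB")
```

By this estimate, 4-bit quantization brings the weights down to roughly a quarter of their 16-bit size, which is what makes models of this scale plausible on high-memory laptops at all.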

Windows users can deploy Open WebUI from GitHub with an NPU backend. Performance trails Apple apps by 25%.

IT admins prefer on-device for compliance. Microsoft 365 Copilot phones home 65% of the time. VMware tests reveal 40% admin overhead for cloud.

PC Makers Pivot to 50+ TOPS On-Device AI

AI model proliferation demands efficient on-device AI hardware. Hugging Face has added 50,000 models since January 2026. PCs risk obsolescence without local stacks.

Intel targets 60 TOPS in Panther Lake by Q2 2026. AMD aims for 70 TOPS in Strix Point refresh. Software investments narrow the gap, boosting Intel and AMD margins 15% per analyst forecasts.

PC vendors that reach 50+ TOPS of on-device AI by Computex 2026 stand to dominate the market. Cloud-reliant rivals risk losing share to Apple.