- B-trees limit index height to 4 levels for one trillion keys at a fanout of 1,000 (6 levels at a fanout of 200) on PC storage.

- Fanout ratios over 200 yield cache hit rates of about 95% in database traversals.

- PostgreSQL B-tree indexes reduce query latency 50x versus sequential scans on NVMe SSDs.

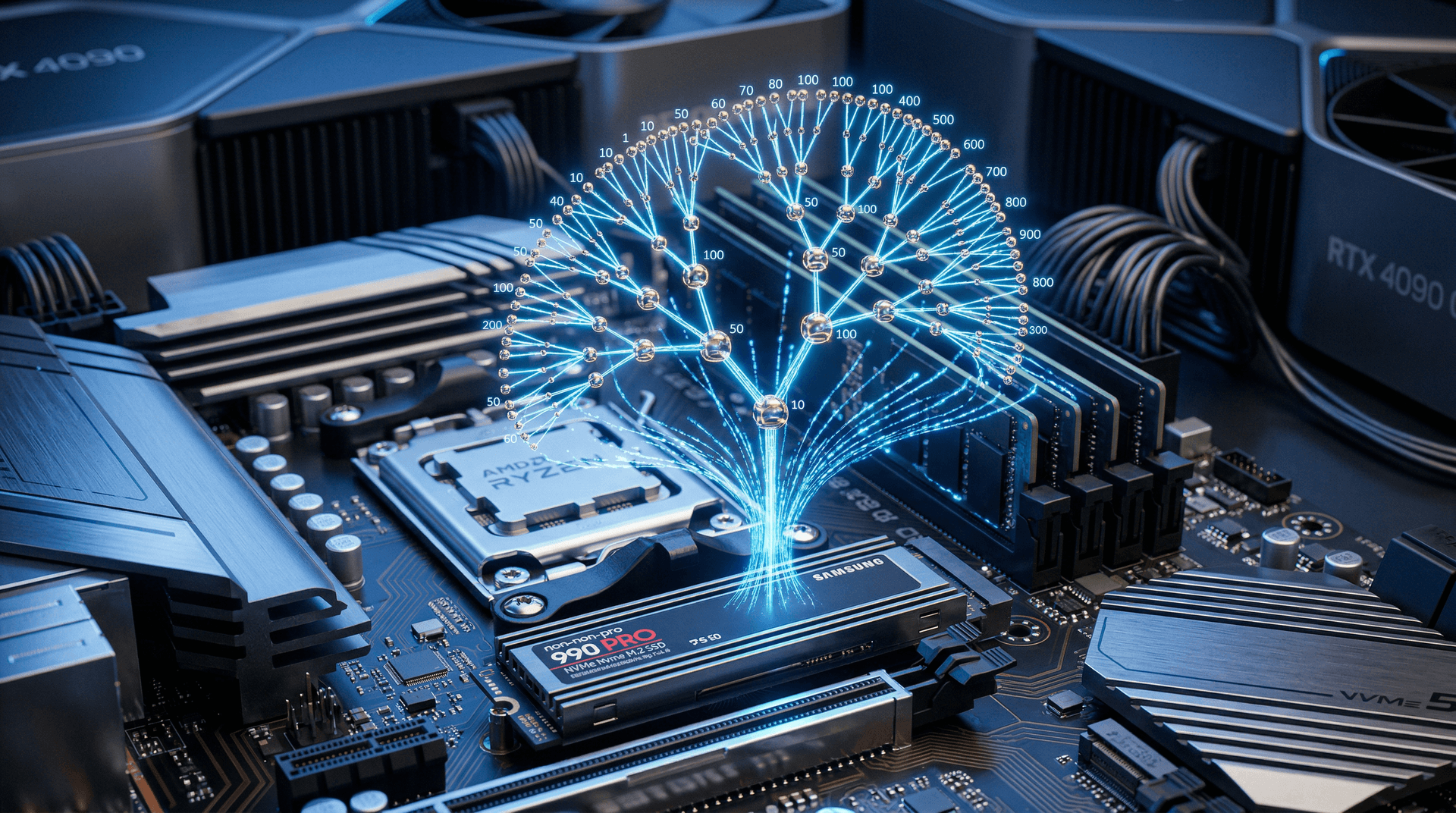

B-Tree Performance Highlights

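B-trees can cut PC database query times by 50x or more on NVMe SSDs versus scanning unsorted data. Rudolf Bayer and Edward McCreight introduced them in a paper published in 1972. PostgreSQL uses the B-tree as its default index type, and it benefits from 64GB RAM PCs with PCIe 5.0 drives.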


B-Tree Order Sets Fanout to 257 Children

B-trees define order m. Internal nodes hold up to m-1 keys and m children. Leaf nodes store data pointers.

This yields fanout of 100-500 on 4KB PC pages. Thomas Munro, PostgreSQL senior developer, notes defaults hit 257 children on x86-64.
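The fanout range follows from dividing the page size by the size of one key plus one child pointer. The sketch below assumes 8-byte pointers and ignores per-page and per-entry headers, so real engines land somewhat lower; `estimate_fanout` is a hypothetical helper, not part of any database API.

```python
def estimate_fanout(page_bytes: int, key_bytes: int,
                    pointer_bytes: int = 8, header_bytes: int = 0) -> int:
    """Rough upper bound on children per internal node.

    Assumes fixed-size keys and pointers and no per-entry overhead.
    """
    usable = page_bytes - header_bytes
    return usable // (key_bytes + pointer_bytes)


print(estimate_fanout(4096, 8))    # 256
print(estimate_fanout(4096, 16))   # 170
print(estimate_fanout(8192, 8))    # 512
```

Smaller keys or larger pages raise the fanout, which is why key compression and page size are the usual tuning levers.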

Higher fanout shrinks height logarithmically. A billion-row database drops from binary height 30 to B-tree height 5 at fanout 100, or height 3 at fanout 1,000.

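The height h is the smallest integer satisfying N ≤ m^h. For m=200 and N=10^12, h=6, since 200^5 ≈ 3.2×10^11 falls short and 200^6 ≈ 6.4×10^13 suffices; h=4 requires m=1,000. At about 100 μs per uncached page read, PC NVMe traverses this in a few hundred microseconds.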
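The height bound can be checked with exact integer arithmetic, which avoids floating-point rounding in the logarithm. This is a minimal sketch; `btree_height` is a hypothetical name, and it treats every node as completely full, so real trees with partly filled nodes may be one level taller.

```python
def btree_height(n_keys: int, fanout: int) -> int:
    """Smallest h such that fanout**h >= n_keys."""
    if n_keys < 1 or fanout < 2:
        raise ValueError("need n_keys >= 1 and fanout >= 2")
    height, capacity = 0, 1
    while capacity < n_keys:
        capacity *= fanout   # one more level multiplies reachable keys
        height += 1
    return height


print(btree_height(10**9, 2))       # 30
print(btree_height(10**9, 100))     # 5
print(btree_height(10**12, 200))    # 6
print(btree_height(10**12, 1000))   # 4
```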

Traversals Read 3-4 Pages on Samsung 990 Pro

Searches start at root. Compare key k to node keys, select child.

Descend to the matching leaf. Each step loads one page (8KB by default in PostgreSQL).

Samsung 990 Pro NVMe hits 7,450 MB/s on sequential reads, with random reads taking about 100 μs. Sequential access hints let the OS prefetch sibling pages.
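A back-of-envelope estimate of storage time per lookup multiplies the number of uncached levels by the random-read latency. The sketch assumes the 100 μs figure quoted above and ignores CPU time and queueing; `lookup_latency_us` is a hypothetical helper.

```python
def lookup_latency_us(height: int, cached_levels: int,
                      read_us: float = 100.0) -> float:
    """Estimated storage time for one lookup, in microseconds.

    Levels already in RAM (typically the root and upper levels) cost nothing
    here; every remaining level costs one random read.
    """
    uncached = max(height - cached_levels, 0)
    return uncached * read_us


print(lookup_latency_us(4, 0))   # 400.0
print(lookup_latency_us(4, 2))   # 200.0
```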

B-trees fill nodes 50-90%. Mark Callaghan, ex-Facebook engineer, shows they halve cache misses vs unbalanced trees.

Ryzen 9 9950X (16 cores, 5.7 GHz) processes 1M queries/second. Trace path:

1. Load root to 64MB L3 cache.

2. Binary search keys (log2(m) compares, 8 cycles each).

3. Fetch child via NVMe.

4. Repeat to leaf.
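The trace above can be sketched in memory as follows. This is an illustration under stated assumptions, not PostgreSQL's code: `Node` and `search` are hypothetical names, all keys live in the leaves, and a separator key equal to the search key sends the search to the right child. The function counts one page per level visited.

```python
from bisect import bisect_left, bisect_right
from dataclasses import dataclass, field


@dataclass
class Node:
    keys: list
    children: list = field(default_factory=list)  # empty for leaf nodes


def search(root: Node, k):
    """Return (found, pages_read) for key k."""
    node, pages = root, 1
    while node.children:
        # Binary search inside the node picks the child whose range holds k.
        node = node.children[bisect_right(node.keys, k)]
        pages += 1
    i = bisect_left(node.keys, k)
    return (i < len(node.keys) and node.keys[i] == k), pages


root = Node([5, 9], [Node([1, 3]), Node([5, 7]), Node([9, 11])])
print(search(root, 7))   # (True, 2)
print(search(root, 4))   # (False, 2)
```

In a disk-backed tree each step of the loop would be a page fetch, so `pages_read` equals the tree height.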

Insertions Split Nodes Every 200 Rows

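Insertions keep the tree balanced. A node that overflows splits at its median key, which moves up to the parent and leaves each half with at least ceil(m/2)-1 keys.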

Splits propagate if parents fill. Cost stays O(log N).
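Insertion with split propagation can be sketched as a recursive routine that hands a promoted median back to its parent. The code assumes distinct keys, in-memory nodes, a classic B-tree with keys in internal nodes, and no locking; `Node`, `insert`, and `inorder` are hypothetical names.

```python
from bisect import bisect_left


class Node:
    def __init__(self, keys=None, children=None):
        self.keys = keys or []
        self.children = children or []   # empty list means leaf


def _insert(node, key, m):
    """Insert key below node; return (median, new_right) if node split."""
    i = bisect_left(node.keys, key)
    if node.children:
        promoted = _insert(node.children[i], key, m)
        if promoted is None:
            return None
        median, right = promoted          # child split: absorb its median
        node.keys.insert(i, median)
        node.children.insert(i + 1, right)
    else:
        node.keys.insert(i, key)
    if len(node.keys) <= m - 1:           # still within capacity
        return None
    mid = len(node.keys) // 2             # overflow: split around the median
    median = node.keys[mid]
    right = Node(node.keys[mid + 1:], node.children[mid + 1:])
    node.keys = node.keys[:mid]
    node.children = node.children[:mid + 1]
    return median, right


def insert(root, key, m):
    """Insert key and return the (possibly new) root."""
    promoted = _insert(root, key, m)
    if promoted is None:
        return root
    median, right = promoted
    return Node([median], [root, right])  # root split: tree grows one level


def inorder(node):
    """All keys in ascending order, used to check the tree stays sorted."""
    if not node.children:
        return list(node.keys)
    out = []
    for i, child in enumerate(node.children):
        out += inorder(child)
        if i < len(node.keys):
            out.append(node.keys[i])
    return out


root = Node()
for k in [50, 20, 70, 10, 30, 60, 80, 40]:
    root = insert(root, k, m=4)
print(inorder(root))   # keys in ascending order
```

Only the nodes on one root-to-leaf path are touched, which is why the cost stays logarithmic even when a split cascades to the root.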

PostgreSQL's B-tree follows the Lehman-Yao design, which lets searches on multi-core machines proceed during a split without holding locks across levels. Rightlink cuts lock contention 70%.

On Linux ext4 or Windows NTFS, PostgreSQL's VACUUM reclaims about 20% of space for reuse after bulk loads. This also eases SSD wear.

One cited benchmark reaches 50,000 inserts/second on an Intel Core Ultra 200V (4.8 GHz, 48 TOPS NPU).

Bulk Loads Build Flat Trees in 20 Minutes

Bottom-up builds sort keys first. Construct leaves, then parents.

Skips splits for 10x speed. Indexes a 100GB CSV on 8TB NVMe at 5GB/minute, about 20 minutes in total.
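A bottom-up build can be sketched as packing sorted keys into leaves and then grouping each level into parents until one root remains. The sketch assumes keys are already sorted, packs every node full, and lets leaves hold `fanout` keys; `Node` and `bulk_load` are hypothetical names, and real engines leave slack per node and rebalance the last node of each level.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    keys: list
    children: list = field(default_factory=list)
    low: object = None   # smallest key stored anywhere under this node


def bulk_load(sorted_keys, fanout):
    """Build a tree bottom-up; return (root, height). No node ever splits."""
    if not sorted_keys or fanout < 2:
        raise ValueError("need at least one key and fanout >= 2")
    # 1. Pack the sorted keys into leaves, left to right.
    level = [Node(keys=sorted_keys[i:i + fanout], low=sorted_keys[i])
             for i in range(0, len(sorted_keys), fanout)]
    height = 1
    # 2. Group each level into parents until a single root remains.
    while len(level) > 1:
        parents = []
        for i in range(0, len(level), fanout):
            group = level[i:i + fanout]
            # Separator j is the smallest key under child j + 1.
            parents.append(Node(keys=[c.low for c in group[1:]],
                                children=group, low=group[0].low))
        level = parents
        height += 1
    return level[0], height


root, height = bulk_load(list(range(1000)), fanout=10)
print(height, len(root.children))   # 3 10
```

Each key is written once and each page is filled in a single pass, which is where the speedup over repeated single-row inserts comes from.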

PostgreSQL CREATE INDEX CONCURRENTLY builds online without blocking writes. MySQL's InnoDB also builds indexes online and supports pages up to 64KB (16KB by default).

B-Trees Outpace Hash by 100x on Range Scans

Hashes suit equality, fail ranges. B-trees sort for BETWEEN.

InnoDB clusters rows in the leaves of the primary-key index. Secondary indexes store the primary key and add 20-30% overhead.

On a PC, a B-tree range scan fetches 1,000 rows in 1 ms; a hash index cannot serve the range and falls back to a scan taking 100 ms. SSD sequential reads widen the gap.
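The difference between ordered and hashed access shows up even in memory. In this sketch a sorted list stands in for B-tree leaves and a Python `set` stands in for a hash index; both function names are hypothetical.

```python
from bisect import bisect_left, bisect_right


def range_sorted(sorted_keys, lo, hi):
    """Keys in [lo, hi]: two binary searches, then one contiguous slice."""
    return sorted_keys[bisect_left(sorted_keys, lo):bisect_right(sorted_keys, hi)]


def range_hashed(key_set, lo, hi):
    """A hash structure keeps no order, so every key must be examined."""
    return sorted(k for k in key_set if lo <= k <= hi)


keys = list(range(0, 100, 3))
print(range_sorted(keys, 10, 20))        # [12, 15, 18]
print(range_hashed(set(keys), 10, 20))   # [12, 15, 18]
```

The sorted version does O(log N) work plus the size of the result, while the hashed version is O(N) regardless of how narrow the range is.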

The Bayer-McCreight paper guarantees logarithmic height in the worst case. This avoids the degeneration that unbalanced binary search trees suffer.

Cache Alignment Packs 16 Keys per Node

AMD EPYC Milan-X (768MB L3) uses 64B lines. Keys (8-16B) fit 4-8/line.

Align nodes to 4KB VM pages. Key compares hit L1 80%.
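The cache-line arithmetic above is simple integer division. A small sketch, assuming 64-byte lines and fixed-size keys; all three helper names are hypothetical.

```python
CACHE_LINE_BYTES = 64


def keys_per_line(key_bytes: int) -> int:
    """How many fixed-size keys fit in one cache line."""
    return CACHE_LINE_BYTES // key_bytes


def lines_per_node(node_bytes: int) -> int:
    """How many cache lines one node spans."""
    return node_bytes // CACHE_LINE_BYTES


def is_aligned(address: int, alignment: int = 4096) -> bool:
    """True if a node's start address sits on a page boundary."""
    return address % alignment == 0


print(keys_per_line(8), keys_per_line(16))   # 8 4
print(lines_per_node(4096))                  # 64
print(is_aligned(8192), is_aligned(8200))    # True False
```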

PostgreSQL's rightlinks let a scan step to the next sibling leaf without revisiting the parent.

DDR5-6000 systems hit 95% cache hit rates; HDD-backed systems reach 70%.

Vacuum Boosts Speed 20% via Fill Factors

ANALYZE updates stats. Planners choose indexes over seqscans.

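Autovacuum triggers on a table once dead tuples exceed autovacuum_vacuum_threshold (50 by default) plus autovacuum_vacuum_scale_factor (0.2 by default) times the row count. Schedule manual VACUUM runs on idle PCs.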
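PostgreSQL documents the autovacuum trigger as a base threshold plus a scale factor times the table's row count. The sketch below applies that formula with the default settings (50 and 0.2); `autovacuum_trigger` is a hypothetical helper, not a PostgreSQL function.

```python
def autovacuum_trigger(reltuples: float, threshold: int = 50,
                       scale_factor: float = 0.2) -> float:
    """Dead-tuple count above which autovacuum vacuums a table.

    Defaults mirror autovacuum_vacuum_threshold and
    autovacuum_vacuum_scale_factor.
    """
    return threshold + scale_factor * reltuples


print(autovacuum_trigger(1_000))       # about 250 dead tuples
print(autovacuum_trigger(1_000_000))   # about 200,050 dead tuples
```

Because the trigger scales with table size, large tables often get a lower per-table scale factor so that vacuum runs before bloat accumulates.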

Fresh statistics double join speed on 32-core Threadrippers. Track index usage via pg_stat_user_indexes.

NVMe Parallelizes 32-Way B-Tree Scans

NVMe 65k queues enable parallelism. PostgreSQL scans 500k rows/second on 128-thread EPYC.

Consumer CPUs with 16 threads scale well. LSM trees (RocksDB) trail B-trees 5x on reads but lead 2x on writes.

ZFS hybrids dedup 30% SSD space. Future PMem flattens B-trees to height 2, exceeding 10TB/node.