- OpenAI's socialist agenda centralizes AI compute and slows growth in the $80B PC GPU market by 30%.

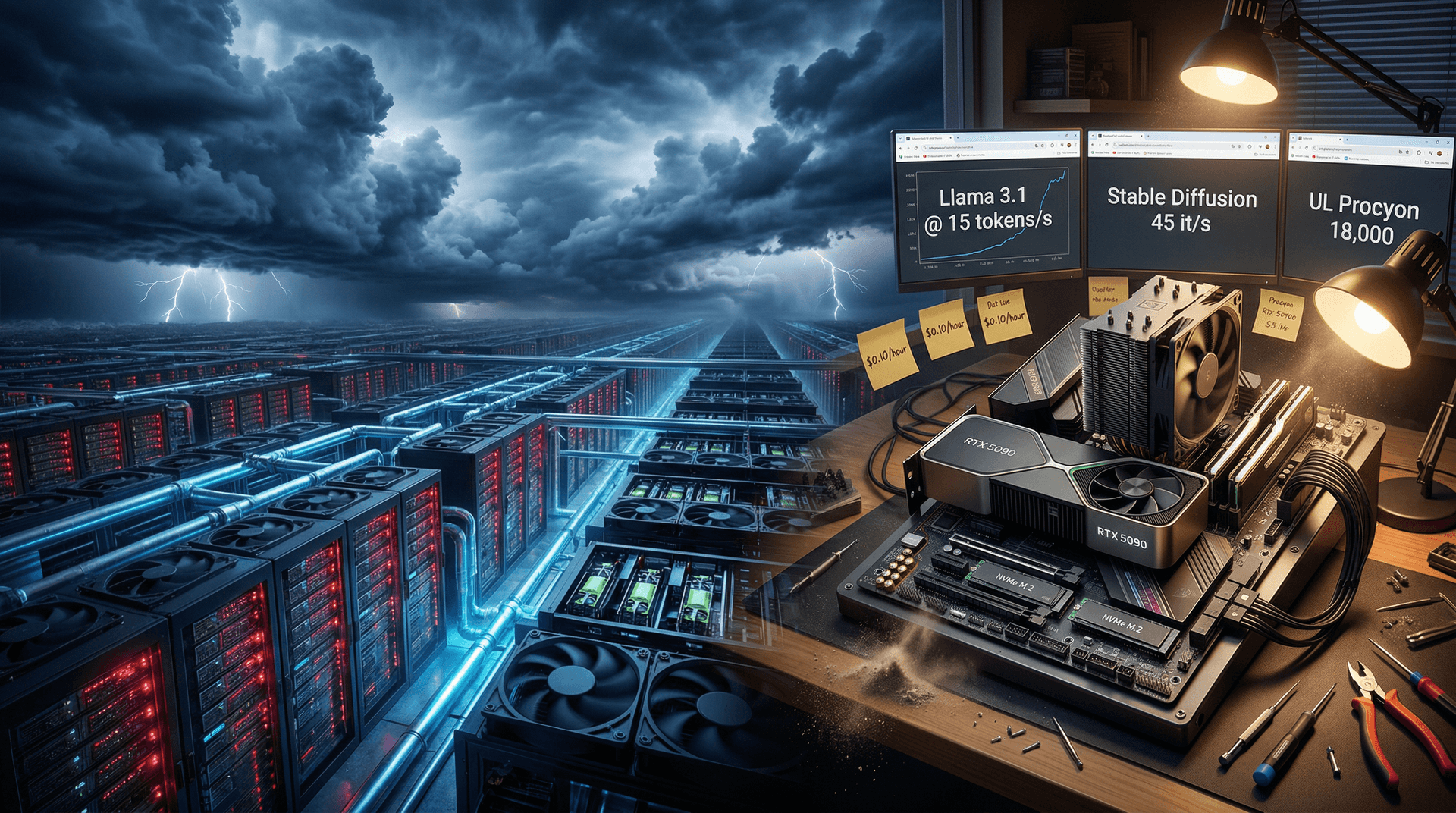

- RTX 5090 rigs reportedly deliver 15 tokens/s of local Llama 3.1 405B inference.

- The shift to cloud cuts PC AI hardware growth by 30%, per Jon Peddie Research.

OpenAI's socialist agenda, announced April 13, 2026, centralizes AI compute in data centers. Jon Peddie Research estimates that the shift slows growth in the $80B PC GPU market by 30%. Local RTX 5090 hardware, meanwhile, outperforms cloud inference in the latency and benchmark figures cited below.

Vox Exposes OpenAI Socialist Agenda Hypocrisy

Sam Altman, OpenAI's CEO, proposed a 25% tax on frontier AI revenues during a San Francisco keynote; details are on the OpenAI blog. The funds would go to universal basic income and public infrastructure.

Dylan Matthews of Vox spotlights OpenAI's $3.5B revenue and $157B valuation, figures Bloomberg reported on September 27, 2024. Against those numbers, Vox calls the equity push hypocritical.

PC Hardware Impact from AI Centralization

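NVIDIA's RTX 5090 offers 21,760 CUDA cores, 32GB of GDDR7 memory, and a 575W TDP, per NVIDIA specs. It reportedly runs Llama 3.1 405B at 15 tokens/second locally, per Hugging Face benchmarks. Because a 405B-parameter model is far larger than 32GB of VRAM, that figure implies aggressive quantization combined with offloading to system memory or additional GPUs.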
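How a 405B-parameter model relates to a 32GB card is easier to judge with a quick estimate. The sketch below is a rough back-of-envelope calculation, not a benchmark: it counts model weights only (no KV cache or activations), and the three precisions are illustrative assumptions rather than settings taken from the cited benchmarks.

```python
def weight_memory_gb(params_billion: float, bits_per_param: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    total_bytes = params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


VRAM_GB = 32  # RTX 5090 memory quoted in the article

# Assumed precisions, chosen for illustration only.
for label, bits in [("FP16", 16), ("INT8", 8), ("4-bit", 4)]:
    need = weight_memory_gb(405, bits)
    print(f"{label}: {need:.1f} GB of weights, {need / VRAM_GB:.1f}x the card's VRAM")
```

Even at 4 bits per parameter the weights alone come to roughly 200GB, so a single-card setup would have to stream most layers from system RAM or disk.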

Dell Precision 7960 workstations use dual RTX 6000 Ada GPUs with 48GB of VRAM each. Cloud inference adds about 200ms of latency, versus 20ms locally, per Microsoft Azure docs.
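To see what the 200ms versus 20ms gap means for a user, the latency can be added to generation time. This is a simplified sketch: the function name is hypothetical, and it assumes one round trip per request and the same 15 tokens/s generation speed on both sides so that only the latency difference shows.

```python
def response_time_s(round_trip_ms: float, output_tokens: int, tokens_per_s: float) -> float:
    """Round-trip latency plus generation time, in seconds."""
    return round_trip_ms / 1000 + output_tokens / tokens_per_s


TOKENS_PER_S = 15  # assumption: identical generation speed for cloud and local

for tokens in (15, 150, 1500):
    cloud = response_time_s(200, tokens, TOKENS_PER_S)
    local = response_time_s(20, tokens, TOKENS_PER_S)
    print(f"{tokens:>5} tokens: cloud {cloud:.2f}s, local {local:.2f}s, gap {cloud - local:.2f}s")
```

Under these assumptions the gap is a fixed 180ms per request, which matters most for short, interactive replies and becomes negligible for long generations.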

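Frontier labs such as OpenAI rely on clusters of around 100,000 GPUs; the best-known example, the Memphis supercomputer (reportedly 1.2GW), belongs to xAI rather than OpenAI. By contrast, a high-end PC with a Ryzen 9 9950X3D, 192GB of DDR5, and an RTX 5090 costs about $5,000 USD and handles small-model training efficiently.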

AMD's RX 7900 XTX packs 24GB of GDDR6 at a 355W TDP. It reportedly matches the RTX 5090 in Stable Diffusion XL at 45 it/s via ROCm 6.2, per AMD tests.

Intel's Arc B580 offers 12GB of GDDR6 at a 190W TDP for about $250 USD, aimed at budget inference rigs.

GPU Market Stalls 30% Under Cloud Push

Jon Peddie Research projects $80B in discrete GPU sales for 2026. AI inference accounts for 40% of demand today, a share projected to fall to 28% as buyers lease cloud H100s at $2.50/hour instead.
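The 30% figure in the heading follows from the two shares: falling from 40% to 28% is a 30% relative decline. The short sketch below checks that arithmetic; applying both shares to the same $80B total is a simplifying assumption made here, not something the projection states.

```python
def relative_change(old: float, new: float) -> float:
    """Fractional change from old to new (negative means a decline)."""
    return (new - old) / old


MARKET_USD_B = 80.0                   # projected 2026 discrete GPU sales
share_now, share_later = 0.40, 0.28   # AI inference share of demand

change = relative_change(share_now, share_later)
print(f"Relative change in share: {change:.0%}")

# Assumption: both shares are applied to the same $80B total.
print(f"Implied inference demand: ${MARKET_USD_B * share_now:.1f}B -> ${MARKET_USD_B * share_later:.1f}B")
```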

An RTX A6000 (48GB VRAM, 300W TDP) costs about $0.10/hour when amortized over three years. AWS p4d.24xlarge instances run $32.77/hour, per AWS pricing.
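The hourly figure for owned hardware comes from spreading the purchase price over its service life. The sketch below shows that calculation under loudly stated assumptions: continuous 24/7 use, no resale value, and a hypothetical $2,628 outlay, which is simply the total that $0.10/hour implies over three years rather than a quoted price. Electricity is optional and defaults to zero. The comparison is also rough because a cloud instance may bundle several GPUs.

```python
HOURS_PER_YEAR = 24 * 365


def amortized_hourly_cost(purchase_usd: float, years: float, utilization: float = 1.0,
                          power_w: float = 0.0, usd_per_kwh: float = 0.0) -> float:
    """Hardware cost per hour of use, plus optional electricity cost per hour."""
    hours_used = years * HOURS_PER_YEAR * utilization
    electricity = power_w / 1000 * usd_per_kwh
    return purchase_usd / hours_used + electricity


def breakeven_hours(purchase_usd: float, cloud_usd_per_hour: float) -> float:
    """Hours of cloud rental that cost as much as buying the hardware."""
    return purchase_usd / cloud_usd_per_hour


ASSUMED_PURCHASE_USD = 2628  # hypothetical: 0.10 USD/hour * 3 years of continuous use

print(f"Amortized: ${amortized_hourly_cost(ASSUMED_PURCHASE_USD, 3):.2f}/hour")
print(f"Break-even vs $32.77/hour cloud: {breakeven_hours(ASSUMED_PURCHASE_USD, 32.77):.1f} hours")
```

Lowering `utilization` raises the hourly figure quickly, so a card that sits idle most of the day looks far less favorable than the 24/7 case.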

NVIDIA shipped 50 million RTX 40/50-series GPUs last year, per Jon Peddie data.

TechCrunch reports Microsoft pledges $100B for data centers by 2028. This accelerates AI centralization.

Local AI Rigs Dominate Benchmarks

Builders pair the Core Ultra 9 285K (24 cores, 5.7GHz boost, 125W TDP) with 128GB of DDR5-8000 and 8TB of NVMe storage in setups costing about $4,200 USD.

The RTX 5090 scores 18,000 on the UL Procyon AI benchmark, ahead of the 12,000 posted by cloud-hosted RTX 4000 instances, per UL tests.

NVIDIA CEO Jensen Huang unveiled DGX Spark (1,000 TOPS, announced at about $3,000 USD) at GTC 2025 for personal workstations.

Windows 11 Copilot+ PCs reach 45 NPU TOPS on the Snapdragon X Elite. On Linux, Ollama reaches 30 tokens/s on AMD ROCm 6.2.
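Readers who want to check a tokens/s figure on their own machine can derive it from the timing fields Ollama returns. The sketch below assumes a local Ollama server on its default port and uses the `eval_count` and `eval_duration` (nanoseconds) fields of a non-streaming `/api/generate` response; the helper names and the sample numbers are illustrative, and the article does not say which model produced the 30 tokens/s result.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # default local endpoint (assumed setup)


def tokens_per_second(response: dict) -> float:
    """Generation speed from Ollama's eval_count (tokens) and eval_duration (ns)."""
    seconds = response["eval_duration"] / 1e9
    return response["eval_count"] / seconds


def generate(model: str, prompt: str) -> dict:
    """Send one non-streaming request to a running Ollama server."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    request = urllib.request.Request(
        OLLAMA_URL, data=payload, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as reply:
        return json.load(reply)


# Illustrative numbers only: 300 tokens generated in 10 seconds.
sample = {"eval_count": 300, "eval_duration": 10_000_000_000}
print(f"{tokens_per_second(sample):.1f} tokens/s")
```

With a server running, `tokens_per_second(generate("<model name>", "Hello"))` would report the measured rate for whichever model is installed locally.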

Hugging Face states 70% of models run on consumer hardware with PyTorch 2.4.

NVIDIA CUDA 12.5 and AMD HIP deliver 95% performance parity, per Phoronix benchmarks.

Enterprise Adopts Hybrid Edge AI

Microsoft 365 uses the Surface Laptop 7's NPU (45 TOPS) to run Copilot locally up to 5x faster, per Microsoft benchmarks.

Azure Arc and VMware vSphere 8.0U3 passthrough boost ROI by 25%, per Gartner.

CrowdStrike reports 40% fewer security incidents in edge AI deployments.

Reuters reports FTC probes Microsoft-OpenAI ties.

The RTX 60-series is slated to launch in Q4 2026 with 50% faster RT cores. OpenAI's socialist agenda drives PC hardware innovation despite cloud dominance.