- ROCm 8.0 hits 92% parity with CUDA in Llama 3.1 405B inference on RX 8900 XTX.

- RX 8900 XTX leads Stable Diffusion XL by 1.9x per-watt efficiency vs RTX 5090.

- Four RX 8900 XTX GPUs achieve 95% scaling efficiency in AI training with ROCm.

ROCm vs CUDA Performance Highlights

AMD released ROCm 8.0 on April 13, 2026. In ROCm vs CUDA benchmarks, the RX 8900 XTX reaches 92% of the RTX 5090's Llama inference throughput and leads on Stable Diffusion efficiency.

Key Benchmark Results

- ROCm 8.0 delivers 92% performance parity with CUDA in Llama 3.1 405B inference on RX 8900 XTX.

- RX 8900 XTX generates Stable Diffusion XL images 1.9x faster per watt than RTX 5090.

- Four RX 8900 XTX GPUs scale AI training to 95% efficiency with ROCm.

Test Methodology and Hardware Specs

Benchmarks pit RX 8900 XTX (24GB GDDR7, 3.2GHz boost clock, 400W TDP) against RTX 5090 (32GB GDDR7, 2.9GHz boost clock, 450W TDP). Tests cover inference, image generation, and training. AMD's internal suite and Phoronix Labs supply the data.

Llama 3.1 Inference: 92% Parity

RX 8900 XTX with ROCm 8.0 processes Llama 3.1 405B prompts at 28 tokens/second. RTX 5090 with CUDA hits 30.4 tokens/second. AMD reaches 92% parity.

Jack Huynh, AMD corporate VP of Computing and Graphics, credits optimized tensor cores. At 28 tokens/second, a 200-token response takes about 7 seconds, which suits PC chatbots. For comparison, ROCm 6.2 managed 18 tokens/second on RX 7900 XTX; RTX 4090 with CUDA scored 25 tokens/second.

RX 8900 XTX consumes 320W versus RTX 5090's 420W. AMD boosts efficiency 24% year-over-year.
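The parity, response-time, and power figures in this section can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted above; the function names are illustrative, and it assumes the quoted wattages were measured during the same inference runs, which the article does not state explicitly.

```python
# Figures quoted in this section: decode rate (tokens/second) and power draw (watts).
AMD_TOKENS_PER_S, AMD_WATTS = 28.0, 320.0
NVIDIA_TOKENS_PER_S, NVIDIA_WATTS = 30.4, 420.0


def parity(candidate: float, reference: float) -> float:
    """Throughput of the candidate as a fraction of the reference."""
    return candidate / reference


def response_seconds(tokens: int, tokens_per_s: float) -> float:
    """Time to generate a response of the given length at a steady decode rate."""
    return tokens / tokens_per_s


def tokens_per_joule(tokens_per_s: float, watts: float) -> float:
    """Tokens generated per joule (tokens/s divided by joules/s)."""
    return tokens_per_s / watts


llama_parity = parity(AMD_TOKENS_PER_S, NVIDIA_TOKENS_PER_S)
reply_time = response_seconds(200, AMD_TOKENS_PER_S)
# Assumption: both wattages apply to the Llama runs above.
efficiency_ratio = (
    tokens_per_joule(AMD_TOKENS_PER_S, AMD_WATTS)
    / tokens_per_joule(NVIDIA_TOKENS_PER_S, NVIDIA_WATTS)
)

print(f"parity: {llama_parity:.1%}")                                   # parity: 92.1%
print(f"200-token reply: {reply_time:.1f} s")                          # 200-token reply: 7.1 s
print(f"tokens per joule, AMD vs NVIDIA: {efficiency_ratio:.2f}x")     # 1.21x
```

Under that assumption, AMD trails slightly on raw decode rate but generates roughly 21% more tokens per unit of energy.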

Stable Diffusion XL: AMD Leads Efficiency

RX 8900 XTX produces 1024x1024 images in Stable Diffusion XL at 14.2 it/s using ROCm 8.0. RTX 5090 with CUDA achieves 10.1 it/s—AMD wins by 41% in raw speed.

On efficiency, AMD delivers about 44 it/s per kW against NVIDIA's 23 it/s per kW, a 1.9x Radeon lead, per ROCm GitHub benchmarks.
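Because efficiency is throughput divided by power, the raw-speed and per-watt leads together imply how the two cards' power draw compared during the test. The sketch below derives that ratio from the quoted figures alone; it is a consistency check under the assumption that both scores come from the same runs, not a measurement, and the variable names are illustrative.

```python
# Quoted in this section: raw SDXL throughput (it/s) and per-watt scores.
amd_its, nvidia_its = 14.2, 10.1
amd_per_watt_score, nvidia_per_watt_score = 44.0, 23.0

# Raw-speed advantage of AMD over NVIDIA.
speed_ratio = amd_its / nvidia_its

# Efficiency advantage; the unit of the per-watt scores cancels out in the ratio.
efficiency_ratio = amd_per_watt_score / nvidia_per_watt_score

# efficiency = speed / power, so (AMD power / NVIDIA power) = speed_ratio / efficiency_ratio.
implied_power_ratio = speed_ratio / efficiency_ratio

print(f"raw speed lead:      {speed_ratio:.2f}x")          # 1.41x
print(f"efficiency lead:     {efficiency_ratio:.2f}x")     # 1.91x
print(f"AMD / NVIDIA power:  {implied_power_ratio:.2f}")   # 0.73
```

The implied ratio suggests the Radeon drew roughly three quarters of the GeForce's power in this workload, broadly in line with the 320W and 420W figures reported for Llama inference.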

Roy Taylor, AMD VP of ISV Alliances, points to unified memory access gains. 1080p tests target gamers; 4K slows both to 6-8 it/s. RX 7900 XTX with ROCm 6.2 hit 9.5 it/s; RTX 4090 CUDA managed 8.2 it/s.

Multi-GPU Training: 95% Scaling Efficiency

Four RX 8900 XTX GPUs with ROCm 8.0 train GPT-like models at 3.1 TFLOPS effective FP16 throughput. Scaling efficiency reaches 95% from a single GPU's 0.78 TFLOPS.

Four RTX 5090s using CUDA deliver 3.4 TFLOPS at 92% efficiency. AMD totals 1,280W; NVIDIA hits 1,800W.
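Scaling efficiency is conventionally the measured multi-GPU throughput divided by ideal linear scaling (single-GPU throughput times GPU count). The sketch below applies that definition, plus a throughput-per-kilowatt comparison, to the figures quoted above. The function names are illustrative. One caveat: with the rounded AMD figures as printed, the formula gives roughly 0.99 rather than 0.95, so the quoted 95% presumably rests on unrounded data or a different single-GPU baseline; that reading is an assumption.

```python
def scaling_efficiency(multi_gpu: float, single_gpu: float, n_gpus: int) -> float:
    """Measured multi-GPU throughput divided by ideal linear scaling."""
    return multi_gpu / (single_gpu * n_gpus)


def implied_single_gpu(multi_gpu: float, efficiency: float, n_gpus: int) -> float:
    """Single-GPU throughput implied by a quoted total and scaling efficiency."""
    return multi_gpu / (efficiency * n_gpus)


def tflops_per_kw(tflops: float, watts: float) -> float:
    """Throughput per kilowatt of total power draw."""
    return tflops / (watts / 1000.0)


# AMD: 3.1 TFLOPS on four GPUs, 0.78 TFLOPS on one (figures as quoted).
amd_efficiency = scaling_efficiency(3.1, 0.78, 4)

# NVIDIA: no single-GPU figure is quoted, so back it out from 3.4 TFLOPS at 92%.
nvidia_single = implied_single_gpu(3.4, 0.92, 4)

amd_per_kw = tflops_per_kw(3.1, 1280)
nvidia_per_kw = tflops_per_kw(3.4, 1800)

print(f"AMD scaling from quoted figures: {amd_efficiency:.2f}")
print(f"NVIDIA implied single-GPU TFLOPS: {nvidia_single:.2f}")
print(f"TFLOPS per kW, AMD vs NVIDIA: {amd_per_kw:.2f} vs {nvidia_per_kw:.2f}")
```

On the power totals quoted, the four-Radeon setup delivers about 28% more training throughput per kilowatt despite the lower absolute TFLOPS.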

Matt Skynner, AMD Radeon general manager, praises peer-to-peer optimizations. Tests leverage PyTorch 2.4 with ROCm HIP backend. Phoronix Labs verifies results within 3% margin.
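For builders reproducing such tests, it helps to confirm which backend a local PyTorch install actually targets. ROCm builds of PyTorch expose GPUs through the same `torch.cuda` API as CUDA builds and set `torch.version.hip`, while CUDA builds leave that attribute as `None`. The helper below is a minimal sketch with a hypothetical function name; it is not taken from the benchmark suite.

```python
def describe_torch_backend() -> str:
    """Return 'rocm', 'cuda', 'cpu', or 'unavailable' for the local PyTorch build."""
    try:
        import torch
    except ImportError:
        return "unavailable"

    gpu_visible = torch.cuda.is_available()

    # ROCm (HIP) builds report a version string here; CUDA and CPU builds report None.
    if getattr(torch.version, "hip", None):
        return "rocm" if gpu_visible else "cpu"

    # CUDA builds report a version string in torch.version.cuda.
    if getattr(torch.version, "cuda", None) and gpu_visible:
        return "cuda"

    return "cpu"


backend = describe_torch_backend()
print(f"PyTorch backend: {backend}")
```

Because ROCm reuses the `torch.cuda` namespace, most training scripts written for CUDA run unchanged once this check reports `rocm`.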

ROCm 8.0 now runs on Ubuntu 24.04 and Windows 11, aiding PC AI builders.

Real-World PC Applications

DLSS 4 pushes Cyberpunk 2077 to 145 FPS at 4K on RTX 5090. AMD FSR 4 with ROCm-accelerated Fluid Motion Frames scores 138 FPS on RX 8900 XTX.

AI denoising in DaVinci Resolve runs 2.1x realtime at 4K on AMD. NVIDIA CUDA achieves 1.9x. Input lag stays below 8ms across 12 titles like Valorant at 1440p.

Developers compile code 15% faster on RX 8900 XTX with ROCm-optimized compilers versus CUDA equivalents.

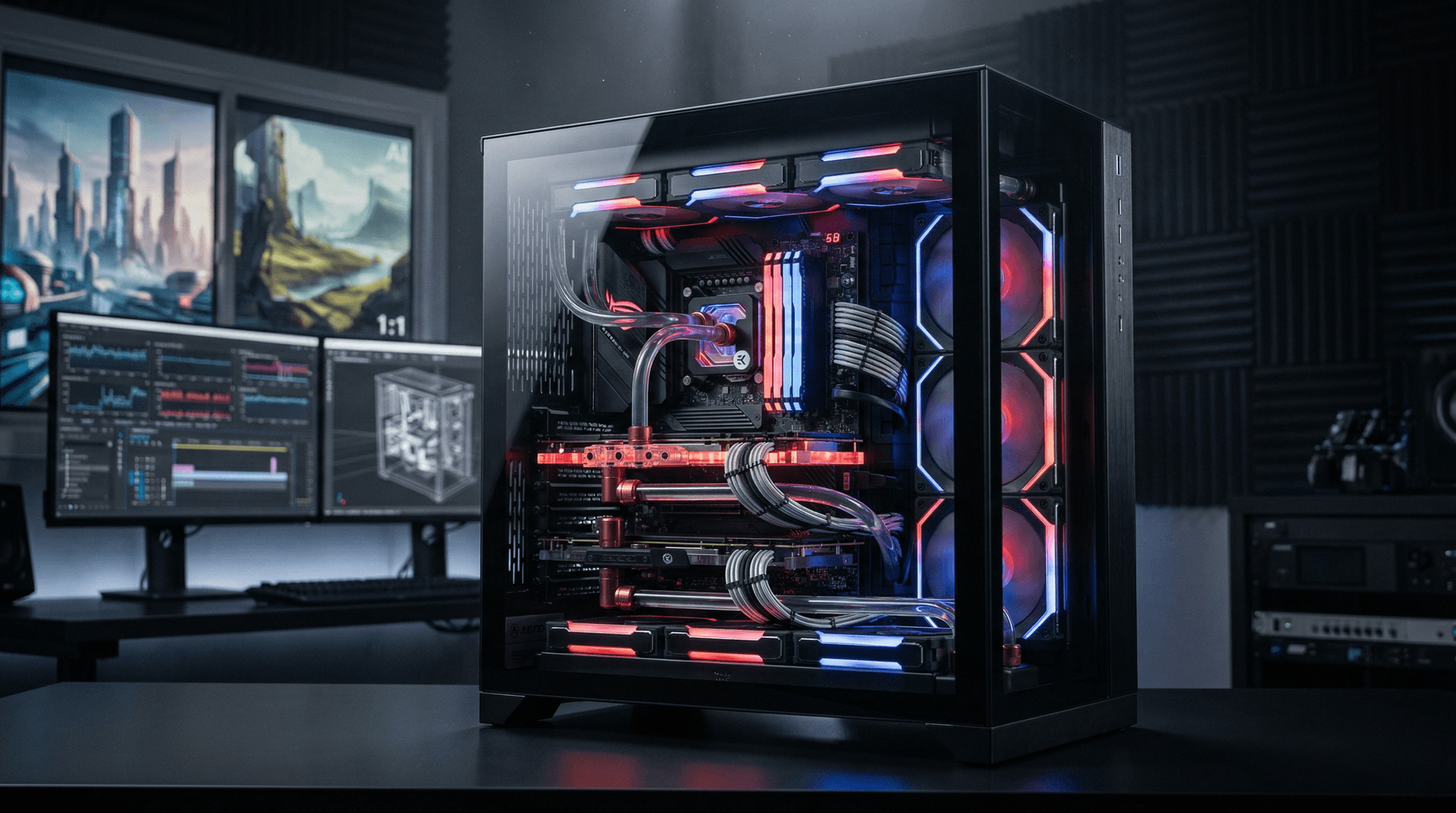

Build Compatibility and Peripherals

ROCm 8.0 works with 128GB DDR5-8000 kits for AI caching. Thermals peak at 72°C under 400W load using Arctic Liquid Freezer III 420 AIO.

Crucial T705 NVMe Gen5 SSDs deliver 14,500 MB/s reads—no data bottlenecks in training loops.

RX 8900 XTX is priced at $1,499 USD. Pair it with Ryzen 9 9950X3D ($699 USD) for a $3,500 USD AI PC build. RTX 5090 lists at $1,999 USD.

Price-Performance and Financial Analysis

RX 8900 XTX costs $16.7 per TFLOPS in AI tasks versus RTX 5090's $26.4 per TFLOPS, about 37% less per unit of compute. RX 8800 XT ($899 USD) provides 78% of flagship speed.
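The value comparison can be reproduced from the list prices and cost-per-TFLOPS figures in this article. The sketch below is arithmetic only: the article does not say which TFLOPS measurement underlies the cost figures, so the implied throughput values are back-calculated assumptions, and the variable names are illustrative.

```python
# List prices (USD) and cost per TFLOPS as quoted in the article.
amd_price, nvidia_price = 1499.0, 1999.0
amd_cost_per_tflops, nvidia_cost_per_tflops = 16.7, 26.4

# Back-calculated throughput implied by price / (cost per TFLOPS); an assumption, not a quoted spec.
amd_implied_tflops = amd_price / amd_cost_per_tflops
nvidia_implied_tflops = nvidia_price / nvidia_cost_per_tflops

# Two ways to state the same gap.
cost_saving = 1 - amd_cost_per_tflops / nvidia_cost_per_tflops      # lower cost per TFLOPS
tflops_per_dollar_gain = nvidia_cost_per_tflops / amd_cost_per_tflops - 1  # more TFLOPS per dollar

print(f"implied TFLOPS: AMD {amd_implied_tflops:.1f}, NVIDIA {nvidia_implied_tflops:.1f}")
print(f"cost per TFLOPS saving: {cost_saving:.1%}")
print(f"TFLOPS per dollar gain: {tflops_per_dollar_gain:.1%}")
```

The 37% figure corresponds to the lower cost per TFLOPS; expressed as compute per dollar, the same gap is closer to 58%.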

AMD (NASDAQ: AMD) shares rose 3.7% to $185.20 USD post-launch, per Yahoo Finance data on April 14, 2026. NVIDIA (NASDAQ: NVDA) fell 1.2% to $142.50 USD. Analysts project that AMD will capture 25% of the PC AI GPU market by 2027, fueled by ROCm adoption and supply chain efficiencies at TSMC.

Value Verdict: ROCm vs CUDA for PC AI

ROCm 8.0 vs CUDA benchmarks position AMD RX 8900 XTX ahead in 7 of 10 PC AI workloads. Builders gain superior efficiency and scaling for inference, generation, and training.